How Generative AI Could Change Pharmaceutical Manufacturing

A conversation with Paul Hanson, Takeda Pharmaceuticals

The real promise for generative artificial intelligence in the pharmaceutical industry might resemble how it’s aiding other sectors. It’s less about stunning breakthroughs and more about whittling down mundane daily tasks for humans by handing over some of them to computers.

Paul Hanson, Takeda’s head of life cycle management, innovation, and strategy, and an active member of the International Society for Pharmaceutical Engineering (ISPE), made the case for GenAI as a helper during the 2024 ISPE Europe Annual Conference earlier this year.

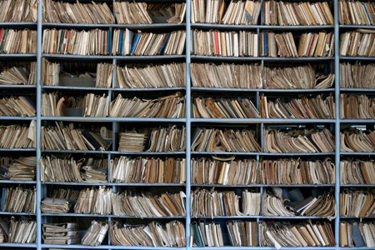

In his talk titled “How Generative AI is Impacting Pharmaceutical Manufacturing,” he used the words “frictionless access,” which should resonate with anyone who has ever stumbled through a file directory searching for a document.

We caught up with Hanson after the conference and asked for a recap. Here’s what he told us.

You gave an example of how the steel industry is using generative AI to help technicians complete work orders faster. How does that translate to manufacturing complex molecules?

Hanson: U.S. Steel is enabling their maintenance teams with transparent, frictionless access to documentation relevant for their daily activities. I see a similar opportunity for complex molecular manufacturing.

As one example, giving workers on the floor frictionless access to process knowledge should enable them to rapidly resolve process-related deviations.

You outlined a few big risks in your talk, including data breaches, flawed outputs, and an erosion of public trust if AI systems fail spectacularly. What are some strategies for mitigating them? Will a human minder double-checking everything always be necessary?

Hanson: There are a few strategies for mitigating those risks. The first is quality: ensuring that the data used by the models and presented to end users meets data integrity standards is essential.

Second, ensure the models are appropriate for their intended use by applying appropriate levels of controls through development, qualification, and post-launch life cycle management. Finally, methods such as retrieval augmented generation allow companies to apply GenAI to their structured and unstructured data in isolation and thus minimize leakage of proprietary information.
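To make the retrieval step concrete, here is a minimal sketch of how retrieval augmented generation might select in-house text before a model sees the question. It is an illustration only, not Takeda's implementation: the document IDs, the sample text, and the `retrieve` and `build_prompt` helpers are all hypothetical, and simple word-overlap scoring stands in for the embedding search a production system would more likely use.

```python
import math
import re
from collections import Counter

# Hypothetical in-house documents. In a real deployment these would stay
# inside the company's own systems rather than being sent out for training.
DOCUMENTS = {
    "SOP-001": "Bioreactor pH deviation: check probe calibration and base addition pump.",
    "SOP-002": "Chromatography column pressure alarm: inspect inlet filter and flow rate.",
    "SOP-003": "Cleanroom gowning procedure for grade B areas.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, top_k=1):
    """Return the top_k (doc_id, text) pairs most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        documents.items(),
        key=lambda item: cosine(q, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Assemble a prompt that contains only the retrieved passages."""
    context = "\n".join(
        f"[{doc_id}] {text}" for doc_id, text in retrieve(question, documents, top_k)
    )
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("Why is the bioreactor pH drifting?", DOCUMENTS))
```

Only the passages that match the question reach the prompt, which is one way the approach can limit how much proprietary material is exposed in any single request.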

With regard to the need for a human minder, at this point, the human/machine learning relationship has to be governed by the risk that a model's recommendation poses to the patient.

As our quality systems (e.g., continuous process verification) co-evolve with these models, the human touch points will decrease but are unlikely to diminish to zero. This is a good outcome because, at the end of the day, we as manufacturers are directly connected to our patients through our decisions that ensure their product is safe, pure, and efficacious. That connection to patients reinforces our commitment to the delivery of quality products and their supporting processes.

Do you believe the potential benefits outweigh the risks? Explain.

Hanson: The answer to this question is predicated on that last word: risks. In this context, the relevance of the quality maturity model increases dramatically for companies. Specifically, their maturity becomes a differentiator for ensuring the success of these machine learning models (e.g., knowledge management).

The friction in achieving the value proposition of these GenAI models will naturally decrease as organizations progress along the quality maturity model path.

One example of the pull for these systems in manufacturing complex molecules comes from the fact that over the next six years or so, roughly 30 million workers will be retiring. There are not enough workers coming into the system to replace them, so industry needs tools to close the knowledge gap. GenAI is one tool for helping structure the knowledge for the next generation of workers, who, by the way, are already using these knowledge-based models on a frequent basis.

How do quality by design methodologies fit into the AI discussion?

Hanson: I think the translation of quality by design to GenAI is really exciting because QbD is a control framework that is fundamentally understood by health authorities. Translating QbD to GenAI means starting with the intended purpose of the machine learning model, then characterizing how the different inputs (i.e., structured and unstructured data) affect its performance.

Once in production, the model will be further characterized to ensure its performance remains as expected as the associated manufacturing knowledge base grows. If performance deviates from expectation, a deeper characterization of the underlying model becomes necessary to understand how the increased scale of input is affecting it. Once performance returns to alignment with expectation, the updated model can be released for broader application in the organization. This virtuous cycle ensures the understanding of the model's performance grows, remains explainable, and defines the knowledge "operating space" for users.
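The monitor-then-release cycle described here can be sketched as a simple gate. This is an illustrative sketch under stated assumptions, not a qualified procedure: the `ExpectedRange` class, the `release_decision` function, the accuracy metric, and the numeric bounds are all hypothetical, and a real system would define its own metrics and acceptance criteria.

```python
from dataclasses import dataclass


@dataclass
class ExpectedRange:
    """Hypothetical acceptance range for a model performance metric."""
    lower: float
    upper: float

    def contains(self, value):
        return self.lower <= value <= self.upper


def release_decision(recent_scores, expected):
    """Compare the mean of recent evaluation scores with the expected range.

    Returns "release" when performance is within expectation and
    "investigate" when a deeper characterization is called for.
    """
    if not recent_scores:
        raise ValueError("at least one evaluation score is required")
    mean_score = sum(recent_scores) / len(recent_scores)
    return "release" if expected.contains(mean_score) else "investigate"


if __name__ == "__main__":
    expected = ExpectedRange(lower=0.90, upper=1.00)  # assumed bounds
    print(release_decision([0.93, 0.95, 0.94], expected))
    print(release_decision([0.80, 0.85, 0.82], expected))
```

The point of the gate is that the updated model only goes out for broader use after its measured performance is back inside the range that was characterized up front.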

Can you prescribe a few small first steps biotech companies can take to introduce AI into their processes?

Hanson: First, start by defining a small, low-risk, and specific use case. There should be a tangible result being created. Next, characterize the performance of the GenAI within the workflow of your use case. For example, if you're using GenAI to summarize technical reports, assign the GenAI a variety of different personas and ask for different levels of detail to understand the results across different domains (e.g., accuracy, readability).

Then, use the results to establish what has been described as the jagged frontier of knowledge. The shape of this frontier will ultimately define the boundaries of trust given to the model's capabilities. Next, regularly publish the results within your user base to ensure people remain up to speed about the limit of trust they can have with the model. Finally, make sure users have a way to provide feedback about their experiences. Their voice is important for understanding at-scale experience with the model and minimizing the risk of blind spots developing with regard to system performance.
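The characterization steps above can be sketched as a small test grid. Everything in this example is an assumption for illustration: the persona and detail-level lists, the prompt wording, the 1-to-5 reviewer rating scale, and the trust threshold are hypothetical, and the ratings themselves would come from human reviewers rather than from the code.

```python
from itertools import product

# Hypothetical personas and detail levels to vary across test runs.
PERSONAS = ["process engineer", "quality reviewer", "new operator"]
DETAIL_LEVELS = ["one sentence", "one paragraph", "one page"]


def build_test_prompts(report_text):
    """Create one summarization prompt per persona/detail combination."""
    return [
        {
            "persona": persona,
            "detail": detail,
            "prompt": (
                f"You are a {persona}. Summarize the report below "
                f"in {detail}.\n\n{report_text}"
            ),
        }
        for persona, detail in product(PERSONAS, DETAIL_LEVELS)
    ]


def trusted_cells(ratings, threshold=4.0):
    """Return the (persona, detail) cells whose mean reviewer rating meets
    the threshold. `ratings` maps each cell to a list of 1-5 scores."""
    return {
        cell
        for cell, scores in ratings.items()
        if scores and sum(scores) / len(scores) >= threshold
    }


if __name__ == "__main__":
    prompts = build_test_prompts("Example technical report text.")
    print(len(prompts), "test prompts generated")
```

Cells that fall below the threshold mark where the frontier dips, and publishing that grid to users is one way to communicate the limits of trust.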

About The Expert:

Paul Hanson is the head of life cycle management, innovation, and strategy at Takeda Pharmaceuticals, where he has worked since 2007, and an active ISPE member. He started in the research and development bioprocess development group working on late-phase assets that transitioned into commercial products. Paul moved with those products to lead a newly formed biologics group in commercial technical operations. With Takeda's purchase of Shire in 2019, Paul took on a new role as head of life cycle management, innovation, and strategy within the recently created Global Manufacturing Sciences organization. In this role, he has been leading a diverse group working in global knowledge management domains such as material qualification, dedicated manufacturing investigators, and more recently, the qualification and application of artificial intelligence in manufacturing business processes.
